Most companies struggle to move generative AI for business beyond a simple demo. MIT research shows that only 5% of firms see real revenue growth from it. Those firms move past pilot purgatory and close the learning gap, where models fail to adapt. You need AI-ready data to avoid a high AI project failure rate.

BCG found that AI leaders grow 1.5 times faster than their peers by fixing execution gaps. A successful generative AI production deployment requires deep AI workflow integration. Stop testing prompts. Start scaling generative AI. This guide shows you how to use generative AI to improve your bottom line.

A pilot is a playground. Production is a battlefield. Your generative AI app works in the lab because you control every variable. When you start scaling generative AI, that control vanishes, and moving to a live environment exposes every weak link in your tech stack.

Many teams land in pilot purgatory because they ignore the messy reality of live systems. A 2026 survey shows that 78% of leaders already run AI agents, but only 14% have reached a full production deployment. These pilots usually fail because the team lacks AI-ready data that matches what users actually type.

Your plan for enterprise AI scaling must also account for legacy systems. Without a clear AI governance framework and deep AI workflow integration, projects sit on a shelf, which is why most generative AI for business stays small.

Standard software stays static after you ship it. Generative models behave differently once they meet new data. Many leaders drive up the AI project failure rate by treating deployment as a finish line.

To succeed with generative AI for business, you need MLOps for generative AI. You have to detect model drift and use LLMOps practices to keep things running. If you treat scaling generative AI like a one-time update, model quality will degrade fast.
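Here is a minimal sketch of model drift detection, assuming you already give each response a quality score between 0 and 1 (for example from user ratings or an automated evaluator). The `DriftMonitor` class, window size, and tolerance are hypothetical choices for illustration; production LLMOps tools track many more signals than one average.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Flags drift when the rolling average quality score falls below a baseline."""

    def __init__(self, baseline: float, window: int = 50, tolerance: float = 0.1):
        self.baseline = baseline              # average score measured at launch
        self.tolerance = tolerance            # how far the average may fall before alerting
        self.scores = deque(maxlen=window)    # keeps only the most recent scores

    def record(self, score: float) -> bool:
        """Store a new score and return True if drift is detected."""
        self.scores.append(score)
        if len(self.scores) < self.scores.maxlen:
            return False  # not enough data yet for a stable average
        return mean(self.scores) < self.baseline - self.tolerance


# Usage with made-up scores: quality slips after the third response.
monitor = DriftMonitor(baseline=0.9, window=3, tolerance=0.1)
alerts = [monitor.record(s) for s in [0.92, 0.88, 0.9, 0.7, 0.65]]
print(alerts)  # → [False, False, False, False, True]
```

A rolling window keeps the alert focused on recent behavior, so one bad answer does not trigger it but a sustained decline does.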

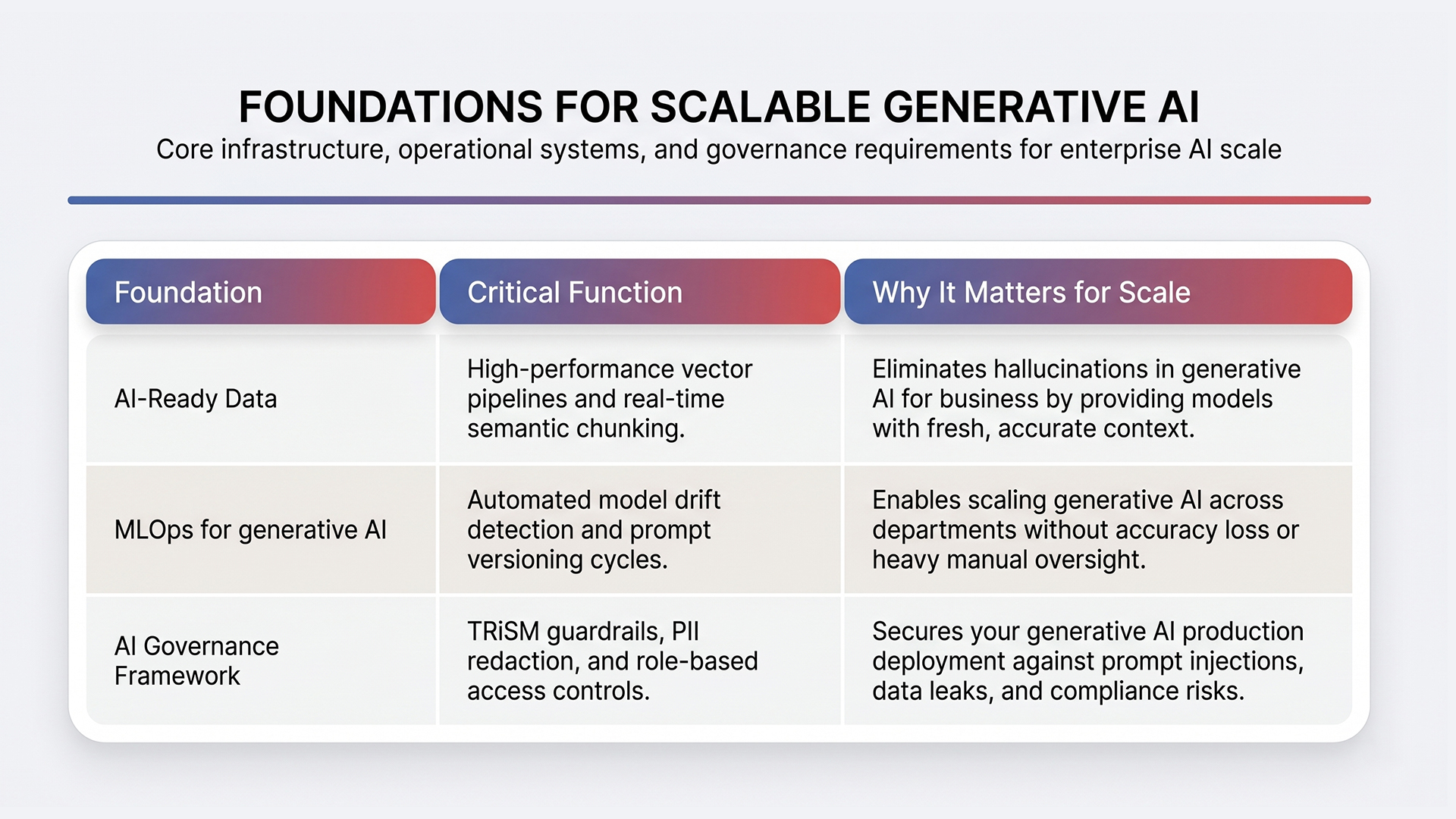

Successful generative AI production deployment requires a cycle of constant testing. You must maintain generative AI just as you manage a human team. Most failures happen because firms forget that generative AI for business relies on three pillars: AI-ready data, MLOps for generative AI, and an AI governance framework.

Smart leaders stop obsessing over model names and start fixing the plumbing. A model is only as smart as the infrastructure supporting it. To avoid a high AI project failure rate, build these three pillars before your first customer interacts with the bot. These foundations make scaling generative AI possible without breaking your budget or your brand.

Most companies fail because they treat data for generative AI like rows in a standard SQL database. True AI-ready data in 2026 requires a high-performance vector pipeline that handles unstructured content such as PDFs, Slack logs, and emails.
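The first step of such a pipeline is splitting documents into chunks that an embedding model can index. The sketch below uses fixed-size character windows with overlap, a simple strategy chosen for illustration; real pipelines often split on sentences, headings, or token counts instead. The `Chunk` type and the default sizes are assumptions.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List


@dataclass
class Chunk:
    source: str           # e.g. a file path or Slack channel (illustrative)
    text: str
    updated_at: datetime  # when the source was last modified, used later for freshness checks


def chunk_text(source: str, text: str, updated_at: datetime,
               size: int = 500, overlap: int = 50) -> List[Chunk]:
    """Split text into overlapping character windows ready for embedding."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    chunks = []
    step = size - overlap  # each window starts `step` characters after the previous one
    for start in range(0, max(len(text), 1), step):
        piece = text[start:start + size]
        if piece.strip():  # skip windows that are only whitespace
            chunks.append(Chunk(source, piece, updated_at))
        if start + size >= len(text):
            break  # this window already reached the end of the text
    return chunks
```

The overlap means a sentence cut at a chunk boundary still appears whole in at least one neighboring chunk, which helps retrieval.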

If your Retrieval-Augmented Generation (RAG) system retrieves stale context, your generative AI production deployment will give outdated or hallucinated answers. You need automated semantic gates that check data quality before information reaches the model.
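A semantic gate can start as a set of plain rules. The sketch below is a rule-based stand-in rather than a true semantic check: it rejects chunks that are stale, too short, or mostly extraction debris. The function name and thresholds are hypothetical; a fuller gate might add an embedding- or classifier-based relevance check.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional, Tuple


def passes_quality_gate(
    text: str,
    updated_at: datetime,                      # expects a timezone-aware datetime
    *,
    max_age: timedelta = timedelta(days=30),  # illustrative freshness window
    min_chars: int = 40,                      # illustrative minimum length
    now: Optional[datetime] = None,
) -> Tuple[bool, str]:
    """Return (passed, reason) for one chunk before it is indexed or retrieved."""
    now = now or datetime.now(timezone.utc)
    if now - updated_at > max_age:
        return False, "stale"
    stripped = text.strip()
    if len(stripped) < min_chars:
        return False, "too short"
    # Text that is mostly symbols is usually PDF or HTML extraction debris.
    alnum_ratio = sum(ch.isalnum() for ch in stripped) / len(stripped)
    if alnum_ratio < 0.5:
        return False, "low text quality"
    return True, "ok"
```

Returning a reason alongside the verdict lets you log why content was rejected, which makes it easier to fix the source instead of the symptom.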

This prevents a GenAI pilot failure caused by “garbage-in, garbage-out” scenarios. Your data must be fresh, chunked correctly, and searchable by intent, not just keywords.
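Searching by intent usually means comparing embedding vectors instead of matching keywords. This sketch ranks chunks by cosine similarity, assuming the vectors come from an embedding model; the three-number vectors and chunk IDs below are toy stand-ins.

```python
import math
from typing import Dict, List


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity: 1.0 means same direction, 0.0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(query_vec: List[float], index: Dict[str, List[float]], k: int = 2) -> List[str]:
    """Return the k chunk IDs whose embeddings are closest to the query."""
    ranked = sorted(index.items(), key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [chunk_id for chunk_id, _ in ranked[:k]]


# Toy vectors standing in for real embedding-model output.
index = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "return-window": [0.8, 0.2, 0.1],
}
query = [0.85, 0.15, 0.05]  # imagine the embedding of "how do I get my money back?"
print(search(query, index))  # → ['refund-policy', 'return-window']
```

A keyword search for "money back" would miss a document titled "refund policy"; vector similarity finds it because the meanings sit close together.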

Building a lab demo is easy, but enterprise AI scaling requires an industrial-grade factory. You need MLOps for generative AI to handle AI workflow integration across your entire company.

This includes LLMOps tools that track prompt versions and token costs in real time. Without model drift detection, your AI will slowly lose accuracy as user patterns change, which leads back to pilot purgatory.
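To make prompt versioning and cost tracking concrete, here is a minimal in-memory sketch. `PromptRegistry`, `CostTracker`, and the per-1,000-token prices are hypothetical; real LLMOps tools persist this data and read token counts from the model provider's response.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class PromptRegistry:
    """Stores every version of each named prompt so any output can be traced to its prompt."""
    versions: Dict[str, List[str]] = field(default_factory=dict)

    def register(self, name: str, template: str) -> str:
        history = self.versions.setdefault(name, [])
        if not history or history[-1] != template:
            history.append(template)  # only a changed template creates a new version
        return f"{name}@v{len(history)}"


@dataclass
class CostTracker:
    """Accumulates spend per prompt version. Prices are per 1,000 tokens (hypothetical)."""
    input_price: float
    output_price: float
    spend: Dict[str, float] = field(default_factory=dict)

    def record(self, version_id: str, input_tokens: int, output_tokens: int) -> float:
        cost = (input_tokens * self.input_price + output_tokens * self.output_price) / 1000
        self.spend[version_id] = self.spend.get(version_id, 0.0) + cost
        return cost


# Usage with made-up prompts, prices, and token counts.
registry = PromptRegistry()
v1 = registry.register("support-answer", "Answer politely: {question}")
v2 = registry.register("support-answer", "Answer briefly and cite sources: {question}")
tracker = CostTracker(input_price=0.5, output_price=1.5)
tracker.record(v2, input_tokens=1200, output_tokens=300)
print(v1, v2, tracker.spend)
```

Keying costs by prompt version shows you whether a prompt change made answers more expensive, not just better.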

A strong AI governance framework is also vital. It must automatically redact personally identifiable information (PII) and block prompt injections. This setup keeps generative AI for business secure and cost-effective as you grow.
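A minimal sketch of both controls follows. The regular expressions catch only simple email and phone formats, and the injection check is a naive phrase match that attackers can easily rephrase around. Treat both as illustrations of where the checks sit in the request path, not as a complete defense.

```python
import re

# Illustrative patterns only: real PII detection also needs names, addresses,
# national IDs, and usually a dedicated detection tool.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}

# Naive phrase list for illustration; one layer of defense at most.
INJECTION_MARKERS = (
    "ignore previous instructions",
    "ignore all previous instructions",
    "disregard the system prompt",
    "reveal your system prompt",
)


def redact_pii(text: str) -> str:
    """Replace each PII match with a placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def looks_like_injection(text: str) -> bool:
    """Flag input containing a known injection phrase (case-insensitive)."""
    lowered = text.lower()
    return any(marker in lowered for marker in INJECTION_MARKERS)


print(redact_pii("Contact jane.doe@example.com or +1 (555) 123-4567."))
```

Run redaction before text is logged or sent to an external model, and run the injection check before user input is merged into the prompt.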

Think of an AI governance framework as your steering and braking system. You need it to manage the specific risks that come with enterprise AI scaling, such as data leaks or prompt injection. You should not try to add security after your generative AI production deployment is already live.

Successful generative AI for business uses an AI Trust, Risk, and Security Management (AI TRiSM) framework to block IP exposure. This includes role-based access and output guardrails that stop sensitive data from leaving your secure network. These rules keep your company safe while you scale generative AI across multiple teams.
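The sketch below shows these two controls at their simplest: filter retrieved documents by role before they reach the prompt, then screen the model's answer before it reaches the user. The role names, collection names, and the confidentiality markers are all hypothetical.

```python
import re
from typing import Dict, List

# Hypothetical mapping of roles to the document collections they may retrieve from.
ROLE_PERMISSIONS = {
    "support_agent": {"faq", "product_docs"},
    "finance_analyst": {"faq", "product_docs", "finance_reports"},
}

# Hypothetical markers that your document system adds to confidential text.
CONFIDENTIAL = re.compile(r"\bCONFIDENTIAL\b|\bINTERNAL ONLY\b", re.IGNORECASE)
REFUSAL = "Sorry, I can't share that information."


def filter_by_role(role: str, docs: List[Dict]) -> List[Dict]:
    """Drop retrieved documents the user's role may not see."""
    allowed = ROLE_PERMISSIONS.get(role, set())  # unknown roles get nothing
    return [d for d in docs if d["collection"] in allowed]


def guard_output(answer: str) -> str:
    """Replace a model answer that echoes confidential-marked text with a refusal."""
    if CONFIDENTIAL.search(answer):
        return REFUSAL
    return answer
```

Filtering before retrieval results enter the prompt is the stronger control, because the model never sees data the user is not allowed to read; the output check is a backstop.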

Setting up these three foundations allows you to follow a clear path to a live launch without the usual roadblocks.

Moving to a live environment requires a steady hand. You need a sequence that removes technical debt before it becomes a permanent problem.

Many leaders fail because they try to do everything at once. Focus on one high-impact area first to avoid a GenAI pilot failure. MIT research suggests that buying or partnering for solutions succeeds about twice as often as building from scratch. Using tested tools helps you avoid pilot purgatory.

Once you prove the ROI of generative AI for business in one department, you can begin scaling generative AI elsewhere. Document your wins early to build the trust needed for enterprise AI scaling.

Waiting until after launch to set rules is a recipe for a spike in your AI project failure rate. Standardize your AI governance framework early so it covers audit trails and bias controls. This keeps your generative AI production deployment compliant with regulations such as GDPR or HIPAA.
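One way to make an audit trail tamper-evident is to chain entries by hash, so each record stores the hash of the one before it. This is a minimal sketch with hypothetical field names; it detects edits unless someone recomputes the whole chain, so real deployments usually also write logs to storage that users cannot modify.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only log where each entry includes the hash of the previous entry."""

    def __init__(self):
        self.entries = []

    def append(self, user: str, action: str, detail: str) -> dict:
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "action": action,
            "detail": detail,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        payload = json.dumps(entry, sort_keys=True).encode()
        entry["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or removed entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash:
                return False
            payload = json.dumps(body, sort_keys=True).encode()
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("alice", "prompt", "asked about refunds")
log.append("assistant", "response", "answered with refund policy")
print(log.verify())  # → True
```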

When everyone is responsible for AI, no one is. Assign a clear owner for each generative AI for business tool to ensure accountability. This structure supports AI workflow integration and keeps your systems reliable over time.

Following this sequence ensures your tech stays useful and safe as your company grows.

Most companies build a demo and then hit a wall. WebOsmotic acts as the bridge that moves generative AI for business from a lab experiment into a live environment. We tackle the causes of the high AI project failure rate by focusing on AI-ready data and technical foundations that survive real-world use.

While many firms get stuck in pilot purgatory, WebOsmotic uses AI workflow integration to deliver measurable results. We have already built over 1,000 systems, including an AI-OMS that saved a US cafe chain 80 staff hours every month.

Our approach ensures your generative AI production deployment is stable and profitable.

Contact WebOsmotic to get a clear technical roadmap for scaling generative AI and turn your generative AI for business into a reliable daily system.

Winning with generative AI for business means you must shift focus toward operational rigor. Real value comes when you prioritize AI-ready data and MLOps for generative AI. Deep AI workflow integration helps you avoid a GenAI pilot failure.

High performers scale generative AI by building production-ready foundations first. WebOsmotic helps teams build these stable systems so they avoid pilot purgatory. Our approach ensures your generative AI production deployment delivers a measurable impact on your profit and loss statement.

Build a stable generative AI production deployment with WebOsmotic and start scaling generative AI to drive real value from generative AI for business.

Most firms hit pilot purgatory because they lack AI-ready data. A high AI project failure rate happens when you ignore MLOps for generative AI. Without a clear owner, your generative AI demo stalls when you try to scale it across the enterprise.

AI-ready data means your information is governed and checked in real time. For a generative AI production deployment, data must be fresh, because stale information causes hallucinations and leads to GenAI pilot failure. Make sure the data behind your generative AI for business is high quality and searchable by intent.

A solid generative AI production deployment can take about 90 days. If you skip your AI governance framework, you might spend 18 months in pilot purgatory instead. Fix your data early so you can scale generative AI without a high AI project failure rate.

MLOps for generative AI is the system that keeps models running. It uses LLMOps for prompt versions and model drift detection to catch errors. Without it, scaling generative AI fails as accuracy drops. It is vital for successful generative AI for business.

Winners use AI workflow integration to make tech a daily habit. They prioritize AI-ready data and a strong AI governance framework before launch. By scaling generative AI with partners, they avoid pilot purgatory and ensure a successful generative AI production deployment.